Summary.

When trying to use AbleToExtract for extracting information from a pictures in a PDF-file into an Excel-worksheet we went into some problems. We investigated them ending up finding solutions in a practical way for all of them.

Background.

This summer we were asked to automate the process of getting paper based information into a data warehouse, by using scanned pages stored in a PDF-file. In an earlier post is the story of selecting a tool for doing the first part of the job. We found a tool called AbleToExtract that takes a PDF-file as input ending up with an Excel workbook containing OCR interpreted data put into feasible cells.

Our next step is to take this file and translate it into a worksheet for loading the data into the tables in the warehouse.

Looking for useful connections in data.

In the earlier manual process there had always been up to 0,5-1% in difference of the total sum of the invoice and the sum of the transaction read into the database. With that method we found getting it down lower was not worth the price, so this error has been accepted, but has not helped building confidence for the data. Now we put the goal much lower, max 0,1% if any.

The data in this application is discounts offered to a specific customer from a wholesaler but paid by the manufacturer. On a weekly or monthly basis these discounts are invoiced to the manufacturer from the wholesaler. This type of transactions are very common when the public sector is buying in the Swedish food market, for example for school meals.

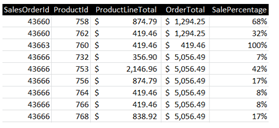

Looking firstly at the detail rows:

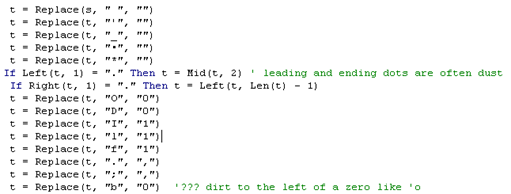

To the left is the name of the product sold, which is not needed since the products are stored in a product table in the DW. The OCR program is English so it does no take into account the three special Swedish characters ÅÄÖ. The next part is “ST” which is the unit used for the amounts. The next box contains the article number, for the wholesaler and for the producer (prefix AXX), and the last four numbers are quantity, and then the discount for each unit (repeated twice) and the product of quantity and discount producing a total for each row. So there is a lot of redundant information in each row that could be used to find and in many cases eliminate the errors in the OCR interpretation.

Starting with the product codes, which should be numeric when neglecting the prefix ‘AXX’. Both these numbers are stored in a DW table, so they are checked there. If the number does not hit in this table it produces an error message that has to be handled manually. If it get a hit on one of the numbers it is only reported as a possible error, if both numbers hit no error is assumed.

In the amount fields we have: If the two numbers representing discount/unit both are equal and numeric (dec. point i Sweden is ‘,’ ) they are believed to be correct. The next test is if the product is correct (quantity * disc/unit = rowtotal) if not, the quantity is checked if it is an integer, in that case the quantity is believed to be correct and the product is calculated. An error message is produced for all rows where this multiplication is incorrect, but with the extra assumptions the rate for manual corrections are minimized.

Let us have a look at the subtotal headers which occurs before a section with new detail lines:

The first number is a customer number in the ERP of the wholesaler followed by the name of the customer. Since a customer could have more than one contract there is a number of the contract to be picked up as well. Since both of these numbers have occurred in imports earlier the chance of getting a hit on them is pretty high. These numbers are collected in a table in the DW, so they could be checked for validity against that table. If no hit then they are reported as a new contract making it a possible OCR error, but also possibly representing a contract not yet entered in the DW table, so manual inspection is called for in this case, to ensure 100% validity. Of course fuzzy logic could be applied trying to match the customer name, but this has not been tested yet.

To make thing still a little bit more complicated the pages of the invoice are not in the correct order in the PDF-file. It always starts with the last page holding the total, probably because that is the best use for the accounting system, when used to check incoming invoices. We need the pages in the correct order to pick up subheading that was printed on the previous page if a page break occurred before a section was completely written, to guaranty that the correct customer is found.

The page problem.

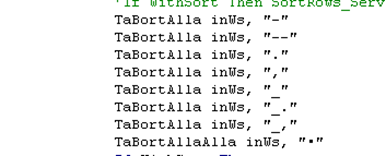

So we have to deal with the page problem first to ensure all rows appears in the worksheet in the correct order. “Page” in Swedish is “Sida”. We put up a filter in Excel to see where it occurs.

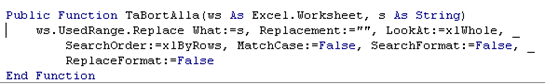

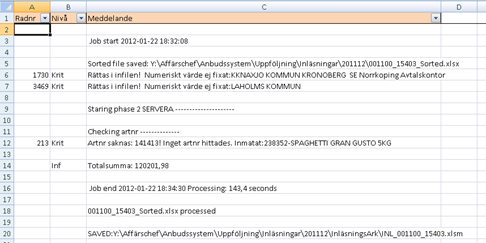

So here we see that this word i found in column E and F and sometimes even in D. As we looked further we also found it in column L on page 17! So it is reasonable to scan all occupied columns in a row, being unable to trust the column. We also discovered that sometimes the word to be found was “Side” due to dust problems. So the program has to be adaptable to variations in just finding this keyword to get rows correctly sorted. The idea is to put in two new column to the left putting in a page number and in the second the relative row within that page. When sorting on A and B we will get the correct order of the rows. For backtracking we put in the original row number so we easily could find the correct row when making manual corrections in the original file. We write the program and get:

We sort on column A and B and delete these columns to achieve:

Having the rows of the sheet in the correct order with the original row number in column A! Now we are ready for the next step.

In the mainframe era of the 70:is IMS was the model to structure the world – in hierarchies. Two decades later when OLAP went into sizing problems when calculating sums for all possible levels, hierarchy was again needed as a compromise. And now 20 years later the same occurs again, managing “Big data” the need for this narrow model is called for again. When you go into performance problems with computers, this is the rescue. “The world is hierarchical”, which sometimes is easy believed if you are naive, and sometimes might be an acceptable compromise.

In the mainframe era of the 70:is IMS was the model to structure the world – in hierarchies. Two decades later when OLAP went into sizing problems when calculating sums for all possible levels, hierarchy was again needed as a compromise. And now 20 years later the same occurs again, managing “Big data” the need for this narrow model is called for again. When you go into performance problems with computers, this is the rescue. “The world is hierarchical”, which sometimes is easy believed if you are naive, and sometimes might be an acceptable compromise. Working with DB design for decades I would say – it is a sacrifice to do. Far too many models fail.. The most classical example is, ok you have one boss, so this could be modelled with “H”. But what about Mr X who works in two projects as well.. He has a boss for his ordinary job, but with 25% he has another boss as well. But sales districts are such, except for Mr Y, whose nephew owns a shop outside his district, so he is of course responsible for that customer anyhow. But as Mr Naindu explains the setup of a disk and its directories could be modelled perfectly as a hierarchy, and with that I agree totally. You love how your files are organized, don’t you?

Working with DB design for decades I would say – it is a sacrifice to do. Far too many models fail.. The most classical example is, ok you have one boss, so this could be modelled with “H”. But what about Mr X who works in two projects as well.. He has a boss for his ordinary job, but with 25% he has another boss as well. But sales districts are such, except for Mr Y, whose nephew owns a shop outside his district, so he is of course responsible for that customer anyhow. But as Mr Naindu explains the setup of a disk and its directories could be modelled perfectly as a hierarchy, and with that I agree totally. You love how your files are organized, don’t you?  FAT was a compromise and we still have to live with it!! You know where you have stored your email in its folders? Most of what you observe in the world, the hierarchical approach means many compromises. Ok, “Bill of material” is good, but what more? Military might also be an exception where “map applies if the reality does not match”. A son could only have one father, you say, or? If you take other examples the compromises you got to accept sooner or later makes your database incapable of keeping up.

FAT was a compromise and we still have to live with it!! You know where you have stored your email in its folders? Most of what you observe in the world, the hierarchical approach means many compromises. Ok, “Bill of material” is good, but what more? Military might also be an exception where “map applies if the reality does not match”. A son could only have one father, you say, or? If you take other examples the compromises you got to accept sooner or later makes your database incapable of keeping up.