Simulating price setting

Many models for this process have been developed…

Many models for this process have been developed…

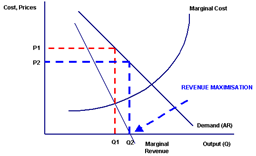

You work in a wholesale business and the purchase prices have changed. This happens all the time, but you want to stay in business. How do you make your best decisions? If you raise you know you might loose some customers, if you don’t you know your revenue will be hit. The problem of course is minor if prices go down, but this is more unusual.

My experience in this field is mostly from wholesales in the food industry. There Movex(M3) is the most common ERP-system used at least here in Sweden. You know you have all the facts you need at hand, but still many times you are pretty unsure if you do the correct changes to adapt. How much will our changes affect our revenue for this year?

My customers have agreements with their customer for half a year or for a quarter, when prices are supposed to be fixed. During this period unforeseen changes appear, exchange rates change, the harvest for some commodities fail due to whether conditions etc etc. So your revenue expectations change all the time. 2 to 4 times a year you have the chance to do adjustments, this is the process I will cover in some posts to follow. How to use Excel, Movex and a relational database to support this process to for helping you make the “best decisions”, or at least to a little bit better than your competitors.

My customers have agreements with their customer for half a year or for a quarter, when prices are supposed to be fixed. During this period unforeseen changes appear, exchange rates change, the harvest for some commodities fail due to whether conditions etc etc. So your revenue expectations change all the time. 2 to 4 times a year you have the chance to do adjustments, this is the process I will cover in some posts to follow. How to use Excel, Movex and a relational database to support this process to for helping you make the “best decisions”, or at least to a little bit better than your competitors.

Components in this “Movex-add in” are: collect relevant historical data, display three scenarios history, now and new. History is obviously what has happened during last year, now is the scenario where you do nothing and new is the scenario where you see what will happen with the changes you suggest. The model is Excel-based and you could easily specify three types of changes % on current price, % change on margin or exact amount per kg or unit. You could specify on a specific item or on a group of items, with Excel’s easy copy and past you make your changes category by category. Whenever you like to you ask the computer about the outcome so far by asking for a simulation. The simulation performed mimic most of M3’s rather complex price setting mechanism’s as price lists, discounts and bonus, based on current settings in M3. Then after each step you could analyse the result down to price list, customer group or a specific customer to see the outcome and to make feasible adjustments.

Components in this “Movex-add in” are: collect relevant historical data, display three scenarios history, now and new. History is obviously what has happened during last year, now is the scenario where you do nothing and new is the scenario where you see what will happen with the changes you suggest. The model is Excel-based and you could easily specify three types of changes % on current price, % change on margin or exact amount per kg or unit. You could specify on a specific item or on a group of items, with Excel’s easy copy and past you make your changes category by category. Whenever you like to you ask the computer about the outcome so far by asking for a simulation. The simulation performed mimic most of M3’s rather complex price setting mechanism’s as price lists, discounts and bonus, based on current settings in M3. Then after each step you could analyse the result down to price list, customer group or a specific customer to see the outcome and to make feasible adjustments.

Collecting Excel-data into a relational database

Looking for an escape from what often is called “Excel hell”. The solutions proposed are numerous. So saying I have the solution for you is of course not the correct way of convincing you.

For many years I have been working with customers whose high valued Excel-solution for solving a business problem has come to a dead-end. The reason for this differs, some of them are:

- The “Excel-guru” responsible has stopped working in the department or left the company

- The volume of data is far to high. Practical limit seems in many cases to be below 100’ rows.

- The data has become to complex. After a while many Excel-solutions start to handle interrelated data. Combining data from different sheets sooner or later becomes a hassle.

- Complex integration with ERP-system. There will sooner or later be a need to feed data into the ERP-system of the company. This means the database in the ERP has to be in sync with the database stored in Excel.

These factors combined with the ordinary problems in Excel, where functions exists but the use of them are poorly known by the persons in charge.

- Keep tables free from duplicates

- Joining data between two sheets avoiding orphans

- Handling many versions of the truth in different workbooks.

Together this means that sooner or later you get the understanding that a relation database is a better place to store your data. In these systems there is a good support for avoiding many of the pitfalls in Excel.

Together this means that sooner or later you get the understanding that a relation database is a better place to store your data. In these systems there is a good support for avoiding many of the pitfalls in Excel.

In no way I would like to criticize the Excel-solutions I have had the benefit of investigating in my career. All of them are built by gifted persons knowing the business problem very well. They just want to find ways of doing it a little bit smarter with the use of their computer. That was their intention from the beginning. After a while persons in the neighborhood starts too give them ideas of how to make the solution better and solve some more related business problems. They put in a new sheet or two they create another workbook for this special case. In the beginning these habits work very well and the process of development goes very quick including new functions. After a while the work most of their time as a system developer and leave their ordinary responsibilities.

On my webb-site I have used these characters to describe the phases I have seen many professionals enter when becoming “Excel-gurus”. The upper row is the positive version and the lower is the negative in the three steps described. As always the time dimension is horizontal.

Unfortunately the step in knowledge needed for designing a relational database is often high if you not have any education in computer science.

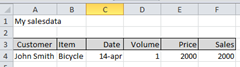

The relational data model is very similar to Excel, it handles data in tables (sheets). It has functions supporting you to avoid duplicate rows in the table, and it has functions for describing the relationships between the data stored in tables in different sheets and workbooks. A very simple model could be Customers, Sales and Products, which you in an initial Excel solution probably would store in one sheet:

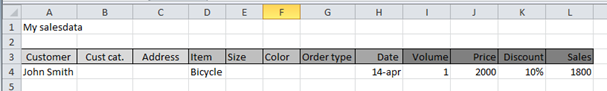

After a while you or someone else get the idea that it would be nice to have some more information about the customer and the items, you also find out that it would be valuable to have information about who took the order or if it was e-sales, and you would like to know the discount.. etc.

So the model grows and get wider and wider:

No problem the limit 256 columns is gone… The problem is that you are now staring to put in redundant information in your model. And if you do a misspelling of John’s name you will not discover he is a returning customer… As shown above you have put in one row the content of four different tables: Customer, Item, Invoice and Invoice row. Sooner or later this will give you trouble.

Response time at a computer

There are tons to read about performance with computers on internet. Milliseconds, latency, priority, MBS, IOPS, paging, CPU%…

There are tons to read about performance with computers on internet. Milliseconds, latency, priority, MBS, IOPS, paging, CPU%…

I can’t name them all. It is a science in itself.

But there would have been no performance issues what so ever, if nobody have had the guts to complain. So performance problems is about expectancy – expected service compared to received .

I do this as I always I have done, and now the silly “thing” don’t let me go on, because it is busy doing something else compared to what I am used to. This is the situation where frustration is born, and some of us get so irritated that we complain, and then it sooner or mostly later becomes an issue, called a “performance problem”.

I do this as I always I have done, and now the silly “thing” don’t let me go on, because it is busy doing something else compared to what I am used to. This is the situation where frustration is born, and some of us get so irritated that we complain, and then it sooner or mostly later becomes an issue, called a “performance problem”.

Problem? The response time in any computing situation could be split up in two parts, service time and queuing time. The service time is what it takes to do what I ask for, which means some time with the CPU, and the time it takes for the disk to get or store some data and it may also include the time involved in getting or updating some information in another computer. There are basically three resources you ask for: CPU, some disk activity on your own computer and some activity from “out there”. If I always was priority one I would always have the same response time = service time, the time it takes for those silly machines to do what I asked for, i.e. what you expect most of the time. But what you always get is service time + queuing time, someone else seems to be more important than you are. So for one reason or another – you have to wait in some of these queues… So analysing performance problems, is analysing queues and waiting situations. In some cases it could be attacked by shrinking the service time, since all programs could always be improved in some way.

Could such analysis then be done by a silly computer? My first answer would be no. But I might be wrong… There are tools that work with both issues. If someone who knows how to analyse stuff like this could explain what they do, then it might be possible to at least make my own computer a useful tool helping to solve these types of issues, AI as it is called. The resources to investigate are only three, my own CPU and disk, and then these strange computers outside my desk. Find the reason and then apply corrective action, the first one is often the most tricky.

If your car is broken, and you just leave it and say, “There is a problem”… You give no explanation on where or why, you give the guy solving this problem a lot more to do… If you say “I have trouble with the windshield wiper when I push this button and this occurs…”. Then you have defined the problem much more narrow, and probably it is fixed when you pick up your car.

The same story is true about response time problems, if you just say “I have a problem”, that might not be helpful enough to solve it. But if you instead say I have a problem with my disk, it don’t do what it has done earlier, you will give the guy you ship it to a much easier job. Or if you just hint him on that this problems occur when I do this… And then nothing happens, and it only happens when I visit this site on internet…

The same story is true about response time problems, if you just say “I have a problem”, that might not be helpful enough to solve it. But if you instead say I have a problem with my disk, it don’t do what it has done earlier, you will give the guy you ship it to a much easier job. Or if you just hint him on that this problems occur when I do this… And then nothing happens, and it only happens when I visit this site on internet…

So – helping the guy trying to help you is always win – win!!

What is the problem?

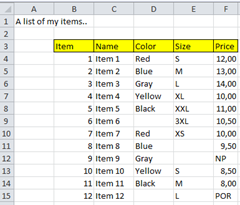

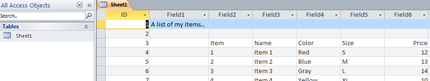

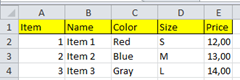

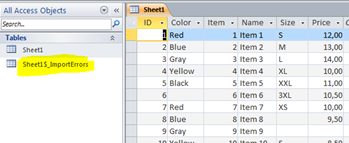

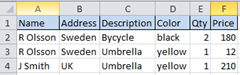

Since Office 97, might have been earlier, 97 was when I started to use these features. It has been very easy to get Excel data into MS Access. In Access there is a tool that does everything for you… You have a list of items in Excel with some properties defined:

They all have an item number and a name, some have colour and size some are missing these properties. In the price column are the prices where you use special coding where NP = No price, and POR = price on request.

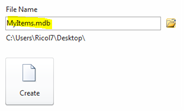

This kind of table is very easy to import into MS Access as a  table. First create a new database, use 2003 format and mdb. If you don’t must use the 2007-format, and in all cases you don’t need it – my advice is skip it. MS has put in some annoying restrictions, so avoid “.accdb”.

table. First create a new database, use 2003 format and mdb. If you don’t must use the 2007-format, and in all cases you don’t need it – my advice is skip it. MS has put in some annoying restrictions, so avoid “.accdb”.

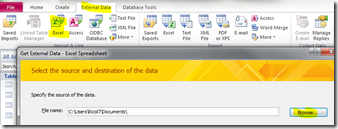

Select “External Data” and the Excel option and click the “Browse”-button to show where your Excel sheet is located.

Select “External Data” and the Excel option and click the “Browse”-button to show where your Excel sheet is located.

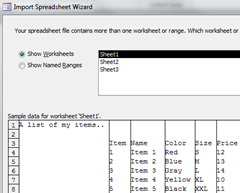

You are now running a wizard that guides you thru the process of importing your spread sheet into a database table. You will get one of these silly warnings “A potential security..” , but click “Open”.

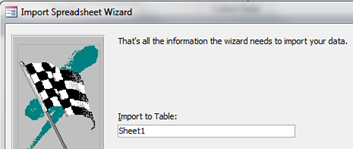

Brave you. You get up a popup window telling you, and you click “Next”… and then finally “Finish”on:

Brave you. You get up a popup window telling you, and you click “Next”… and then finally “Finish”on:

You open the table in MS Access, and you get what you asked for but not what you expected.

You have to comply with the rules, it imported everything that was there. After that lesson learned you get rid of the first two lines and the empty A-column:

You have to comply with the rules, it imported everything that was there. After that lesson learned you get rid of the first two lines and the empty A-column:

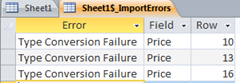

You redo all the “Next”-clicks and it is almost all there in a relational table. There is a table called “Sheet1_ImportErrors” that tells you another story…

You redo all the “Next”-clicks and it is almost all there in a relational table. There is a table called “Sheet1_ImportErrors” that tells you another story…

The wizard did not like some of your data so it neglected it. This is how it works in reality, nice wizard gives you 95% of what you want. How to solve it completely, you don’t have a clue. The true story behind it is that a table in a spread sheet is not always easy to convert to a relational table. The relational table have rules where the Excel tables have none. The wizard tries to overcome these complexities which often ends up – you are left with some problems you don’t know how to handle. In this case it was the price column, which the wizard on good reason’s believed contained numbers, but… So when it found NP and POR, it gave up and neglected these values. Somebody from “the relational world” could say, you can’t do like that, numbers are numbers and text is text…

As an Excel user you don’t understand nothing.

Reading more about the import tool in Access.

Using Excel as a database

A lot is written on the web about using Excel as a database. The opinions diverge about the pros and cons for this approach. You could also read that Excel is “The World’s Most Popular Database” but if I Google on that I get “MY SQL”. If you add Excel to the search – you will only get Excel-results. Nor Google or anybody one else knows the answer to the question “Which is the most popular database?” The answer of course also depends on how you define what a database is.

A lot is written on the web about using Excel as a database. The opinions diverge about the pros and cons for this approach. You could also read that Excel is “The World’s Most Popular Database” but if I Google on that I get “MY SQL”. If you add Excel to the search – you will only get Excel-results. Nor Google or anybody one else knows the answer to the question “Which is the most popular database?” The answer of course also depends on how you define what a database is.

In my work I often come into situation where Excel is used for the storage of data for many mission critical application in business. Depending on size and complexity this approach is more or less successful, often used by “desperate” professionals, needing their job to be done, and lacking resources and time to get there needs satisfied by a professional developer.

In my work I often come into situation where Excel is used for the storage of data for many mission critical application in business. Depending on size and complexity this approach is more or less successful, often used by “desperate” professionals, needing their job to be done, and lacking resources and time to get there needs satisfied by a professional developer.

Being brought up as a database developer I used to deliver my DB-solutions with a form based UI, in MS Access or with HTML. Many users feel awkward in Access, lacking the control Excel gives you in that you always self makes the decision when it is time to save. The HTML solutions almost always get very expensive, but has the great feature of being available for everyone.

This year I have been into three projects where a database has been a very appreciated solution for replacing a bunch of spread sheets. The governments in Sweden has done a lot of investigations of the quality of the water in many of the lakes and rivers. Some samples are collected, it could be water, mud or fishes. The samples are sent to different laboratories and the result is sent back as a spread sheet. During the years thousands of different observations are collected and many qualified professionals has spent a lot of time copying and pasting the figures forth and back trying to understand relationship among the environmental disasters. This spring I have spent time with a company having arranged all their price lists in some 100 sheets in 50 workbooks, containing the prices for about 100 000 items. This system was used for calculating new prices, interacting with the ERP-system and updating the invoice system when changes to prices occurred. The last example is from a manufacturer in the food business, where Excel is used as an intermediate step for storing data obtained from scanned invoices in paper. These projects have given me a deep insight into different aspects of moving data from Excel into a database, which I aim to share in some post on my blog.

A database and all problems are gone..

Databases are often modelled as a cylinder. I have often wondered why? The shape of a cylinder is full of harmony or is it just the fact that disk storage until recently always has had that physical shape?

Relational databases was invented some thirty years ago to better adapt to reality than the traditional hierarchical model had done so far. Many business problems could be formalized to the concept of tables and relations between tables, so I guess it is why this model has been so successful, at least if you look at the history of a company like Oracle.

Personalized by a person like Larry Ellison it is not without controversy..

To introduce new inventions strong entrepreneurs are needed. So fact is RDB:s are popular in many cases as they had been for at least more than twenty years. The reason simply is they do adapt to reality better in many cases, not all. Confirming close to reality also often means quick payback of investment and direct business value. So let us try to find the reason why.

To introduce new inventions strong entrepreneurs are needed. So fact is RDB:s are popular in many cases as they had been for at least more than twenty years. The reason simply is they do adapt to reality better in many cases, not all. Confirming close to reality also often means quick payback of investment and direct business value. So let us try to find the reason why.

In Excel you always feel you have a good control when you see the order in a table. You describe some items with similar properties and you order them row wise and collect the same properties in the same columns. The same concept apply in a relation database. The big difference is that in Excel the rows always appear in the same order – in an RDB the order is “undefined”.  The rows are in the table, but it is up to you to say in which order you want them, in SQL this is called “Order by”. If you just ask for them they could appear in any order.. They are there that is all!

The rows are in the table, but it is up to you to say in which order you want them, in SQL this is called “Order by”. If you just ask for them they could appear in any order.. They are there that is all!

In Excel it is no big issue if you for some reason or another need to store some comments in a column that is mostly used for numbers, you just write them in. In an RDB this is a violation against the rules, is a column for numeric data you have to confirm!! This have some disadvantages but also some big advantages, if you want to have a silly computer helping you – you need consistency. In Excel there is no problem in storing item price in column B in one sheet and storing it in column D in another. The human eye immediately sees the difference and understands, if you want to explain that fact for a stupid computer you get some extra trouble. So the RDB model asks for similar information in the same column. So Excel and RDB tables look the same but behind the scene some different behaviours appear.

These differences makes it uncomfortable for us humans, when we have to change the conceptual model in our brains. We expect one behaviour from the computer and receives another…

It is called frustration, by all means…

But there are more to come. In many business problems we have to define a single occurrence of something, for example one of our products or customer or what so ever. In Excel we have very limit help in this concept, there could be many duplicates of a row in a spread sheet, Excel does not care. In a database model you could ask for help in this context, it is called Primary key. You have to define one of the properties of your entity as unique and put it into one of the columns in your model. You say I don’t want duplicates in this column, if I put them in, please don’t allow it. The big advantage of this is that you could never miss where to put updates if something changes, for example the supplier of a product. You store it in one row and when you ask you will find it there. In Excel you put it in one of the rows and next time you ask you look in the other, getting the wrong answer, because that row you have forgotten to update. This is the principle of not storing the same information in more than in one place. The true strength of the relational model. One information – one place!! This is the fundament for the reliability of databases.

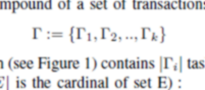

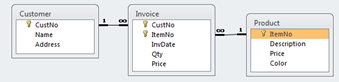

Sometimes reality needs two or more tables to describe a reasonable model of what happens. We have customers buying products from us. So we have a table of customers with some properties as address etc, and we have products with properties like colour that always is the same and price that changes, so in an RDB we store these entities in different tables.

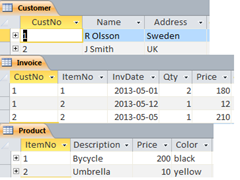

Sometimes reality needs two or more tables to describe a reasonable model of what happens. We have customers buying products from us. So we have a table of customers with some properties as address etc, and we have products with properties like colour that always is the same and price that changes, so in an RDB we store these entities in different tables.  When the customers buys something we invoice them, so we need a separate table for this. So in all a first model ends up with three tables, a table with customers, and one with our products and then one table telling us when some of our customers have bought something from us so we could invoice them. Since we can’t sell a product we don’t have, we want our model to keep track on that, and we don’t want to sell to customers we don’t trust so we collect trustable in the Customer table. Now we don’t want the invoice table to contain an invoice of customer 3 buying item 3 since these doesn’t exist. In an RDB we could set up rules saying we don’t want to register invoices that are impossible, when invoicing please check we confirm to the model, not allowing 3,3. This type of rule is called a relation between tables and the property we say is called “referential integrity”, disallowing orphans to be stored. That is an invoice to a customer that don’t exist or on a product we don’t sell. This type of checking is impossible to achieve from Excel and it is our own burden to keep track of such issues. If we confirm to the relational model the computer takes care of such checking. A traditional Excel-model for this situation would probably look like:

When the customers buys something we invoice them, so we need a separate table for this. So in all a first model ends up with three tables, a table with customers, and one with our products and then one table telling us when some of our customers have bought something from us so we could invoice them. Since we can’t sell a product we don’t have, we want our model to keep track on that, and we don’t want to sell to customers we don’t trust so we collect trustable in the Customer table. Now we don’t want the invoice table to contain an invoice of customer 3 buying item 3 since these doesn’t exist. In an RDB we could set up rules saying we don’t want to register invoices that are impossible, when invoicing please check we confirm to the model, not allowing 3,3. This type of rule is called a relation between tables and the property we say is called “referential integrity”, disallowing orphans to be stored. That is an invoice to a customer that don’t exist or on a product we don’t sell. This type of checking is impossible to achieve from Excel and it is our own burden to keep track of such issues. If we confirm to the relational model the computer takes care of such checking. A traditional Excel-model for this situation would probably look like:

Which have the same information content, but is much more troublesome to maintain. If R Olsson moves to Denmark you must change in two places, but in an well defined RDB you change in one place only. This process is also called “Normalization” – storing one piece of information in just one place. So why then Price in both Product and Invoice table you say, that must be against the rules of normalization. Fact is these are not the same, the price in the product table is a guideline for our sales people (recommended price), but as you know the customer is always right, so when we sell with a discount, which happens, the invoice could end up in another price (actual price). So the name is the same but in reality they reflect two different aspects, that is why they should appear in two different tables, being almost the “same”. If you look closely to the example above you also see something strange, J Smith bought an umbrella for 210, this must be a typing error. These types of errors no computer could help us with, so even if the RDB-model contains a lot of help you still need humans to overlook what is happens in the model. So repeatable checking and updating by copy and paste you could delegate to the computer, but not all issues of course..

Sorry forgot the cascading deletes.. If you define a relation between two tables you could also give rules on what you want to happen in certain situations. If you decide to stop selling a product you might want to delete that row from the product table. What should then happen to the invoice rows in the Invoice table? If you just define a relation with referential integrity the database will disallow you to delete the product, since there are rows in the invoice table with it. If you specify cascade delete the database will automatically delete all rows in the Invoice table on that product for you. This might though not always be what you want.. If you want to keep track on sales two years back. But if the dependent table is a price list giving actual price for different customer this seems to be a good idea. So it is up to you to select the correct option for the business situation you want to model.

Sorry forgot the cascading deletes.. If you define a relation between two tables you could also give rules on what you want to happen in certain situations. If you decide to stop selling a product you might want to delete that row from the product table. What should then happen to the invoice rows in the Invoice table? If you just define a relation with referential integrity the database will disallow you to delete the product, since there are rows in the invoice table with it. If you specify cascade delete the database will automatically delete all rows in the Invoice table on that product for you. This might though not always be what you want.. If you want to keep track on sales two years back. But if the dependent table is a price list giving actual price for different customer this seems to be a good idea. So it is up to you to select the correct option for the business situation you want to model.

Database or Excel–why not both?

Now and then in my profession I arrive in customer situations where this question arises. Lately it has been more and more often. I meet customers who have successfully solved business problems on a departmental level for many years with a sophisticated Excel-solution as a complement to their ERP-system. Then just of a sudden the “guru” disappears and many problems arise.

They call me to solve it… They hope and “pray” I will remove their headaches.

Being a DB-guy for many years I often find solutions with database-technology, there might be others that I miss – who knows? You are always caught in middle of the limitations of your own experiences and brain.

Being a DB-guy for many years I often find solutions with database-technology, there might be others that I miss – who knows? You are always caught in middle of the limitations of your own experiences and brain.

The last decade I have seen Excel evolve from a rather isolated end user tool to a professional development platform for making very attractive business solutions. Excel nowadays is very open for integration with content in corporate databases either thru a data warehouse or in many cases directly to the corporate ERP-system. Excel has been remodelled to be a BI-tool, with the “little” advantage that it is well known by so many.

Returning to the initial question – today you don’t need to choose one or the other – they work very well together.

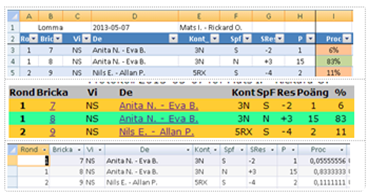

Many sheets in Excel, looks like tables in a database or even like tables in HTML. To the right you see me and my partners failures when playing bridge the other night. Same boring facts presented in 3 different shapes. Excel, HTML and Access. What’s the difference? The human eye is probably most comfortable with the middle approach, HTML – made for presentation.

Information in databases are often as in HTML – tables, so also in Excel. So, what is the difference? If correctly sorted Excel is in the middle and HTML at top… It is all about presentation! HTML solutions are focused on presentation and DB solutions are most often not. Excel is something in the middle, both order and presentation, I think that is why many people feel it is the best compromise. HTML is worst when it comes to flexibility and possibility to change, Excel manages both to some extent depending on who is in charge. Databases have it all, but to a high investment in “knowing how”.

So let us skip HTML for maintaining tables and focus on relational databases compared to Excel. Excel has a lot of flexibility that RDB:s lack, at least with a reasonable investment in knowledge. Excel is in many views a very good place to store information as long as you keep it in one sheet, you have full control and it is easy to handle. When you reach 20 sheets or more you are probably lost, at least if you are the person to inherit something invented by an Excel-“guru” who has just left the company… What are the relations between all these sheets… Too many “IT-gurus” call this state “Excel-hell”. What actually happens is that your company could loose confidence among your customers, since they feel you are “out of control”. They ask for the price of a product and they get two different answers depending on who they ask. There are many truths of the same fact. The basic problem is that the same “fact” is stored in more than one place, when so problems arise.

So let us skip HTML for maintaining tables and focus on relational databases compared to Excel. Excel has a lot of flexibility that RDB:s lack, at least with a reasonable investment in knowledge. Excel is in many views a very good place to store information as long as you keep it in one sheet, you have full control and it is easy to handle. When you reach 20 sheets or more you are probably lost, at least if you are the person to inherit something invented by an Excel-“guru” who has just left the company… What are the relations between all these sheets… Too many “IT-gurus” call this state “Excel-hell”. What actually happens is that your company could loose confidence among your customers, since they feel you are “out of control”. They ask for the price of a product and they get two different answers depending on who they ask. There are many truths of the same fact. The basic problem is that the same “fact” is stored in more than one place, when so problems arise.

In my next article I will try to explain all this in more technical terms. “Normalization”, “Relations”, “Cascading Deletes”… technical terms that have a background in business logic and the way we act and feel.

Follow up new products with Movex and Excel(2)

As always to create a meaningful model for follow up, you need comparison since figures alone mostly are without meaning giving you no clue on what is good or what is bad. Discussing these topics with people working with new product introduction has given me this understanding. Sometimes nothing happens for a period of time we just have the product for delivery. After a while or sometimes before we get the product we plan for a campaign, where we put in marketing efforts to push it. This often leads to a period with a rather steep selling curve. Then after a while everything stabilizes, we change focus and some customers liked the idea and keeps on buying and new arrives. We go into the “normal” accelerant phase which could go on for a year or two with the marketing effort we believe the idea is worth, else it will be a drop out with some loss of confidence with some customers.

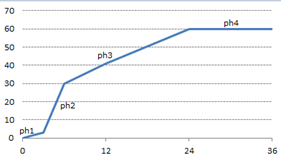

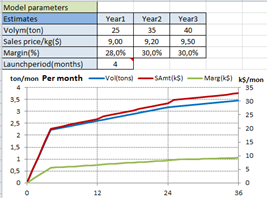

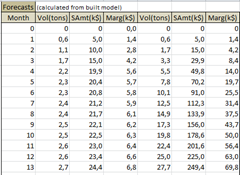

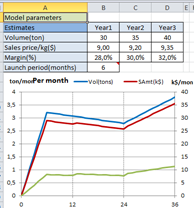

Trying to make all these words meaning for a computer could be something like the curve to the left. ph1 is the initial testing, which should be ignored for monitoring. ph2 is the intense marketing effort giving a significant start of sales. ph3 where marketing effort declines and the product goes into it’s own growth phase with “normal marketing focus”. ph4 is where the product has gone into it’s mature phase, where loss of sales still is compensated of new customers arriving. The four lines giving a good approximation of the start of the bell curve.

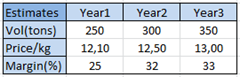

It could be represented by a model described by three lines representing the part interesting for follow up (skipping ph1 which also makes ph2 start at 0). When planning for a new product most organizations try to make estimates on volume and margin based on earlier experience or on market research ending up in tables as below.  Problem one, these tables are always too optimistic for the first year, but that is another story. Most often are the decision to give the new product a try based on these estimates. Could then these figures be combined with the curve to create a model for forecasting the interesting parameters: volume, sales amount and margin. If so we have the comparison values we were looking for, to create a acceptable model for follow up. The only parameter needed is an estimate for the length in months of ph2, the intense marketing period. With this in place a simple forecasting model for monthly values of all then KPI:s could easily be built.

Problem one, these tables are always too optimistic for the first year, but that is another story. Most often are the decision to give the new product a try based on these estimates. Could then these figures be combined with the curve to create a model for forecasting the interesting parameters: volume, sales amount and margin. If so we have the comparison values we were looking for, to create a acceptable model for follow up. The only parameter needed is an estimate for the length in months of ph2, the intense marketing period. With this in place a simple forecasting model for monthly values of all then KPI:s could easily be built.

The model gives you forecast values for KPI:s of interest.

If you would like to test the model, click this link.

The model is fast to give you a hint when you overestimate first year volume. People tell you that the initial marketing phase will long for six month and you need more volume the first year so you put it up to 30. The model react and give you declining growth after that. This could be a correct opinion about what you expect, but if not you have to modify so the curve gives what you expect. This trivial model gives you a hint for what is mathematically possible.

As all mathematical models it is always an approximation, to make it useful the deviations should be acceptable.

Follow up new products with Movex and Excel(1)

I am planning to write a series of articles describing an application i Excel used to follow product introduction when your ERP-system is Movex. The idea is based on a running application developed together with one of my customers.

Most theory published about product life cycle shows a bell curve inspired of the biological life curve also often representing quality of human life depending on age. This model is just conceptual, since real life has a lot more complexity into it.

When introducing new products into a market much focus is on the growth part of the curve. Do we get the payback we need for the project or should we stop the introduction before it is to late? Since a new product is new, often there is very little experience to rely on. The expectation of sales must be forecasted in one way or another. If the new product only is a replacement of an older the sales history of the old product could probably be useful. If similar product has been introduced earlier historical patterns could also be used. Sometimes you introduce a new concept built up by many products with internal relations, and your interest is more on the group as a whole.

In the start-up phase you may have more focus on volume, later on the focus will change to revenue and margin. If the x-axis is in days, weeks or months depends both on the product and in which phase the product is in. The model must be capable of many things to prove helpful in the complex process of introducing new product. I hope that the concepts discussed and the application made available will be a good starting point for developing the basic ideas into a easy and useful tool, but to make this happen we will need advise from you, so don’t hesitate to comment.

Not so Big data

For mortals like me, “Big data” is bigger than I am used to, which also seems to be the accepted definition of this loosely defined term almost everybody seems to use, with diverse definition which always is the case with buzz words. For most of us “big” is GB and above, at least when handling ordinary business problems. What does then these new technologies mean to us mortals?

For mortals like me, “Big data” is bigger than I am used to, which also seems to be the accepted definition of this loosely defined term almost everybody seems to use, with diverse definition which always is the case with buzz words. For most of us “big” is GB and above, at least when handling ordinary business problems. What does then these new technologies mean to us mortals?

As an engineer I have always been fascinated of breaking barriers, which is what happens with the new technologies around “Big data”. What does they mean to me in my job with my customers? Should or must I adapt? “Big data” would never have occurred if not the fantastic progress in “areal density” had occurred during the last decades. So for me, data is not so much bigger, but calls for a big change.

Big changes are always pushed on us, if we like them or not, this goes for bulbs or source separation of garbage. Most of us are lazy, change means action. We like to stroll around the same way we’ve always done, it is much more comfortable.

In the BI-area where I earn my living, the new technology means more or less a revolution, even if most people involved still not seams to have realized that. In most common sizes of DW the need for summarizing data to produce acceptable response times have vanished. This means that central technology in this area like cubes and OLAP no more is needed, and the need of consultants keen on MDX will disappear in the next five years to come. Traditional actors like Cognos(IBM) and Business Objects(SAP) have to react to rookies like QlickView, Tableau and PowerPivot which to my understanding do well without using cubes and MDX. ![]() They simply store most data in RAM and calculates what is asked for on demand. This is in my mind making full use of new technology, based on the big changes in prices of storage and multiprocessor capacity the last decade . This shift also makes developing new applications in the BI-area so much cheaper. The new actors all focus on visualisation and self-service in their marketing, which is made possible with technological leadership.

They simply store most data in RAM and calculates what is asked for on demand. This is in my mind making full use of new technology, based on the big changes in prices of storage and multiprocessor capacity the last decade . This shift also makes developing new applications in the BI-area so much cheaper. The new actors all focus on visualisation and self-service in their marketing, which is made possible with technological leadership.

I guess you also drive Audi Quattro like me: “Vorsprung durch Technik”….